Testing Design: How Researchers Study Social Media

The methods and recommendations from 31 researchers who gathered at a day-long workshop.

A recent paper in the Annals of the New York Academy of Sciences lays out how researchers can study whether platform design changes make social media healthier, even without access to the platforms themselves. Co-authored by Prosocial Design Network's Julia Kamin and David J. Grüning alongside 11 other researchers, it summarises the methods and recommendations from 31 researchers from across academia, industry, and civil society who gathered at a day-long workshop convened by PDN and Google's Jigsaw unit in late 2023.

At Prosocial Design Network, we believe that independent research plays a critical role in building knowledge about prosocial design. It generates evidence that platform designs can lead to positive outcomes, points to the most effective design patterns, and gives advocacy groups and regulators a way to push for reforms from the outside. That is what drove Julia, David and Emily Saltz, then at Google's Jigsaw unit, to co-host this workshop.

For the purposes of their paper, prosocial design refers to the use of platform features, design patterns, and processes that lead to healthy interactions between users, and includes approaches that reduce harmful behavior as well as those that proactively cue and encourage prosocial engagement.

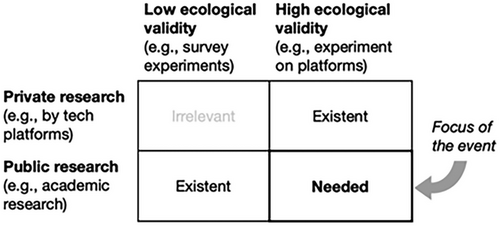

The workshop was driven by a core question: how do you test whether a potential platform design change makes a platform more prosocial when you don't work for the platform and can't access its systems? The researchers identified a few dominant research methods and described their strengths and limitations.

The fundamental tension running through all of them is between ecological validity, meaning how well a study's findings reflect what would actually happen in the real world, and everything else: the more realistic a study is, the more expensive, technically demanding, legally risky, and ethically complicated it tends to become. Each method also comes with limitations on the kinds of interventions it can test. Finally, cost and technical complexity systematically advantage well-resourced institutions, making the whole field harder to enter for smaller or independent research groups. Below is a summary of several of the dominant approaches used in the field and additional promising methodologies:

Common Research Methods

Browser Extensions & Mobile Apps

What it is: Researchers build software that participants download, which then quietly modifies what they see on real platforms like Facebook or YouTube. It can hide divisive political content, reorder feeds, or add warning labels, while tracking how behavior changes as a result.

Strengths: This is as close to real-world testing as independent researchers can get. Participants are on actual platforms, with their real friends and feeds, so findings are more likely to reflect how people actually behave online.

Limitations: Building and maintaining this software is expensive and technically demanding. Platforms frequently update their code, which can break extensions. Recruiting participants is costly, and the resulting samples are skewed, since people who voluntarily download a research browser extension are not average users. There are also legal threats from platforms that argue third-party tools violate their terms of service.

Opportunities: Open-source repositories could dramatically cut development costs if research teams shared code rather than rebuilding from scratch. A centralized research center could maintain a base extension that individual studies plug into, and a shared recruitment panel could reduce costs and improve sample quality over time.

Simulated Platforms

What it is: Researchers build their own fake version of a social media platform and run participants through it to test how different design choices affect behavior. These range from basic clickable mockups to sophisticated environments with AI-generated content and users.

Strengths: Researchers have total control over the environment, making it easy to isolate exactly what is causing an effect. Studies are replicable and offer the flexibility to experiment with platform features and designs.
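One reason simulated platforms make effects easy to isolate is that researchers decide which participant sees which design. A minimal sketch of that assignment step follows, assuming a hypothetical study with three conditions. The condition names, the `assign_condition` helper, and the salt are illustrative and are not taken from the paper or from any specific simulation tool.

```python
import hashlib

# Hypothetical design conditions for an illustrative study.
CONDITIONS = ("control", "warning_label", "reordered_feed")


def assign_condition(participant_id: str, study_salt: str = "demo-study") -> str:
    """Deterministically map a participant to one design condition.

    Hashing the salted ID gives an assignment that looks random across
    participants but is reproducible, so a replication can rebuild the
    same groups from the same IDs and salt.
    """
    digest = hashlib.sha256(f"{study_salt}:{participant_id}".encode("utf-8")).hexdigest()
    return CONDITIONS[int(digest, 16) % len(CONDITIONS)]


# Assign a small batch of made-up participant IDs.
assignments = {pid: assign_condition(pid) for pid in ("p001", "p002", "p003", "p004")}
```

Changing the salt produces a fresh assignment for a new study, while keeping it fixed lets another team reproduce the original groups.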

Limitations: Participants know it is fake, and that changes a lot. Without their real social network, real history, and real stakes, people won't behave the same way they would on a real platform. The gap between "looks like Twitter" and "is Twitter" may be wide in practice. Research teams often build their own platform from scratch, which is expensive and makes it difficult to compare findings across studies.

Opportunities: Some efforts to create quick-start environments such as YourFeed and Truman exist, but the field would benefit from more shared, ready-to-use simulation environments and common data standards so findings can actually be compared. Feeding real platform data into simulated algorithms and content could also help close the realism gap, making simulations function more like staging environments used inside platforms themselves.

Observational Studies (Natural Experiments & Correlational Studies)

What it is: Rather than testing something new, researchers study interventions that platforms have already rolled out using real platform data. Natural experiments find stand-ins for random assignment, like a policy threshold that kicks in automatically, while correlational studies compare groups of users who happen to use features differently.
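The policy-threshold idea can be illustrated with a simple comparison of users just above and just below a cutoff. The sketch below assumes a hypothetical rule that applies a feature to accounts at or above 1,000 followers. The data are toy values invented for illustration, and `threshold_effect` is a made-up helper, not a method from the paper. A real analysis would use a proper regression discontinuity design rather than a raw difference in means.

```python
from statistics import mean


def threshold_effect(records, cutoff, bandwidth):
    """Difference in mean outcome just above vs. just below a cutoff.

    records: iterable of (running_value, outcome) pairs.
    Only observations within `bandwidth` of the cutoff are compared,
    on the assumption that users near the cutoff are otherwise similar.
    """
    below = [y for x, y in records if cutoff - bandwidth <= x < cutoff]
    above = [y for x, y in records if cutoff <= x <= cutoff + bandwidth]
    if not below or not above:
        raise ValueError("no observations on one side of the cutoff")
    return mean(above) - mean(below)


# Toy data (invented): (follower_count, hostile replies per 100 posts).
toy = [(950, 8), (980, 9), (990, 7), (1000, 5), (1010, 4), (1040, 6), (2000, 1)]

effect = threshold_effect(toy, cutoff=1000, bandwidth=50)
print(effect)  # -3: accounts just above the cutoff average 3 fewer hostile replies
```

The account with 2,000 followers is excluded because it sits far from the cutoff, where differences are more likely to reflect the kind of account than the feature itself.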

Strengths: This method has the strongest real-world validity of the three, since you are studying actual people on actual platforms responding to actual features. The research is also inherently relevant to what platforms are already doing.

Limitations: True random assignment is impossible, meaning there is always a chance something else explains the results. More critically, researchers are entirely dependent on platforms sharing data, and the trend is moving firmly toward less access, not more. Scraping data independently carries legal risk, and researchers have to trust that whatever data platforms do share is not itself biased or incomplete.

Opportunities: Regulatory pressure, particularly from legislation like the EU's Digital Services Act, could push platforms to open up more data access. If that happens, opportunities for observational studies become more plentiful. Better matching techniques and shared datasets could also help mitigate the internal validity limitations.

Other Research Methods

Beyond the three main approaches, other methods are promising and worth knowing about. These include: partnering with online community moderators to co-design real experiments inside spaces like Reddit or Wikipedia; recruiting participants directly and nudging their platform behavior via external messaging apps or surveys; creating fake accounts that interact with real users to deliver experimental treatments; using a platform's own advertising infrastructure to push nudges to real users at scale; and building fully AI-populated simulated environments where large language models stand in for human participants entirely.

Each solves a different piece of the core tradeoff. Ads infrastructure can reach huge numbers of real users but can only really deliver simple messages; sock puppet studies, which rely on fake accounts, can test whether people respond to peer pressure online, but you could never turn that into an actual platform feature; and filling a simulation with AI users instead of real people cuts costs dramatically, but nobody is quite sure yet whether AI actually behaves the way humans do online.

Each has its niche: some trade ethical complexity for high realism, others trade realism for low cost and easy setup. In combination with the three most common methods, they point toward a future where researchers can mix and match approaches depending on what a given study actually needs.

About the Prosocial Design Network

The Prosocial Design Network researches and promotes prosocial design: evidence-based design practices that bring out the best in human nature online. Learn more at prosocialdesign.org.
