What It Is

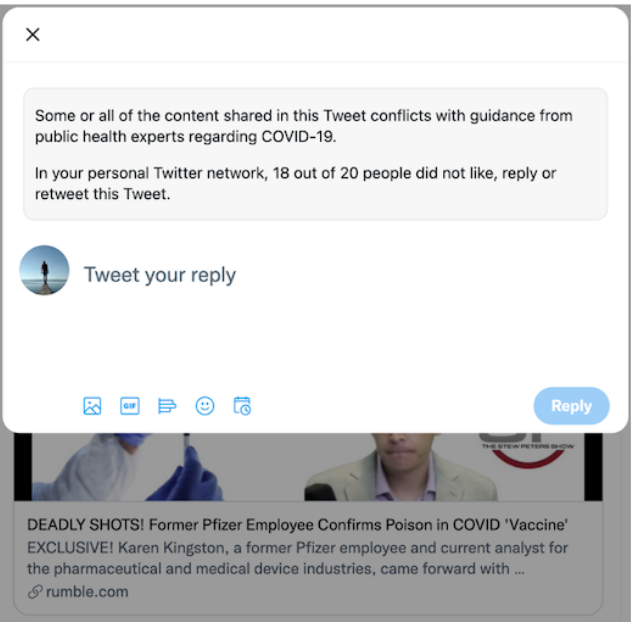

Text added to misinformation content (post or comment) that indicates the number of people in a user’s personal network who have not liked or shared the content.
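As a rough illustration of how such a cue could be produced, the sketch below turns two counts into cue text. It is a minimal sketch under stated assumptions, not the implementation used by any platform or study: the function name `social_reference_cue`, the cue wording, the treatment of the "personal network" as the accounts a user follows, and the choice to show no cue for an empty network are all hypothetical.

```python
def social_reference_cue(network_size: int, engaged: int) -> str:
    """Build cue text from the share of a user's network that did NOT engage.

    network_size: number of accounts in the user's personal network
                  (assumed here to be the accounts the user follows).
    engaged:      number of those accounts that liked or shared the post.
    """
    if network_size <= 0:
        # Assumption: with no network to reference, show no cue at all.
        return ""
    if not 0 <= engaged <= network_size:
        raise ValueError("engaged must be between 0 and network_size")

    # Share of the network that has not liked or shared the post.
    not_engaged_pct = round(100 * (network_size - engaged) / network_size)

    # Hypothetical wording; a real deployment would test its own copy.
    return (
        f"{not_engaged_pct}% of the people you follow "
        "have not liked or shared this post"
    )


if __name__ == "__main__":
    print(social_reference_cue(network_size=200, engaged=6))
```

The cue is framed around non-engagement because the intervention is meant to signal that interacting with the content is not the norm in the user's network.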

Civic Signal Being Amplified

When To Use It

What Is Its Intended Impact

By shifting a user’s perceptions of the normative desirability of engaging with misinformation, Social Reference Cues lead to a reduction in re-shares and likes of misinformation content.

Evidence That It Works

In a series of field experiments, Jones et al. (2023) tested the impact of adding a social reference cue (the percentage of a user’s personal network who did not like or retweet a post) to content labelled as misinformation on Twitter. After downloading a browser extension created for the study, participants (a sample of active English-speaking Twitter users) were asked to spend 30 minutes on Twitter as they normally would. The extension then replaced approximately 50% of each user’s feed with misinformation (all related to COVID-19) and non-misinformation (on other topics such as sports and entertainment) that the researchers had sampled from Twitter. Participants were assigned to one of four experimental conditions that varied which labels appeared on the misinformation content: a) no labels (control); b) misinformation labels only; c) social reference cues only; d) social reference cues plus misinformation labels (combined).

In the combined condition, participants on average shared about 48% less misinformation content than the control group. (Note: all effects we include are statistically significant, unless otherwise stated.) Follow-up studies indicate that social reference cues produce this result by shifting users’ perceptions of their personal network’s normative standards; in other words, users start to perceive engagement with this type of content as something their personal network would disapprove of. Importantly, the authors find that, while a general misinformation label by itself reduces sharing of all content, the combination of a social reference cue and a misinformation label decreases only the sharing of false content, without discouraging engagement with accurate information.

Though the study demonstrates the efficacy of social cues in reducing the sharing of misinformation content, a few limitations warrant consideration. First, the high density of misinformation in the experimental feeds and the use of simulated, uniformly high non-engagement rates may not reflect real-world platform conditions, where false content is typically scarcer and personal network behavior can vary significantly. Second, the brief 30-minute exposure period leaves open the possibility of user habituation, where the corrective impact of social cues diminishes over time as users become desensitized to the intervention.

Why It Matters

Reducing the reach of misinformation content remains a key challenge for social media platforms. While interventions like accuracy prompts and community notes have shown potential, social cues that shift perceptions of which content should be avoided are a promising approach that can complement current strategies for tackling the multifaceted problem of misinformation sharing. (Also see our primer on What We Know About Effective Misinformation Interventions.)